How to Write Prompts That Don't Drift

Keeping your AI on track from line 1 to line 10,000. A mechanical look at why long-context generation degrades, and how to structure prompts that maintain their constraints across extended outputs.

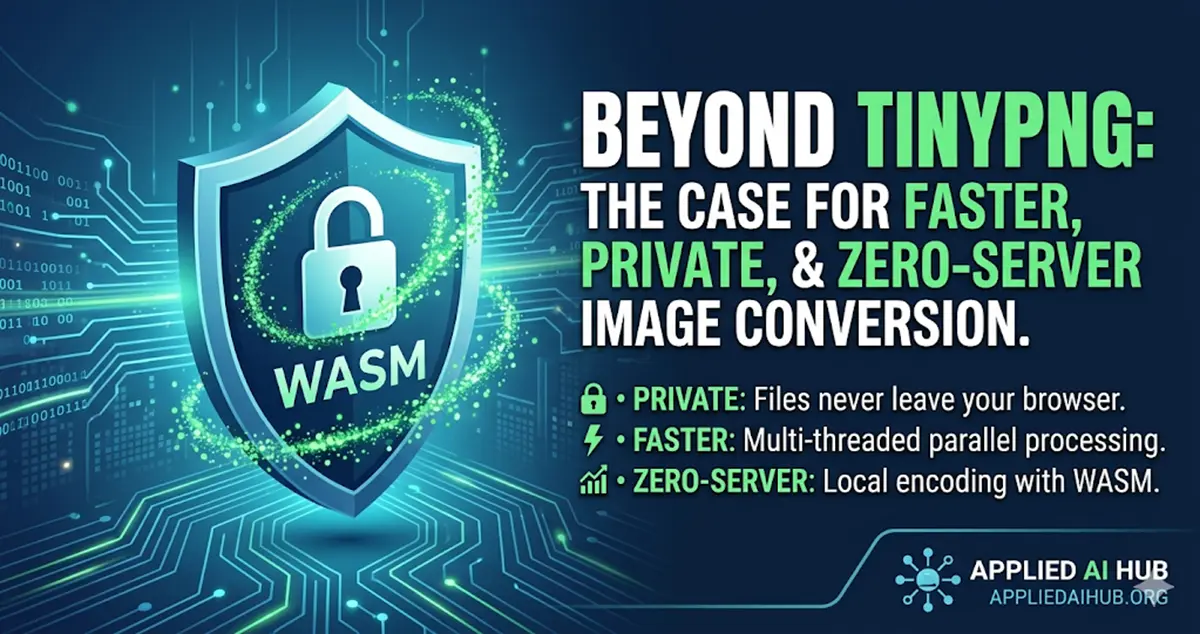

Why move your image optimization to the browser? A deep dive into PNG to WebP and AVIF conversion with a focus on privacy, speed, and batch processing.

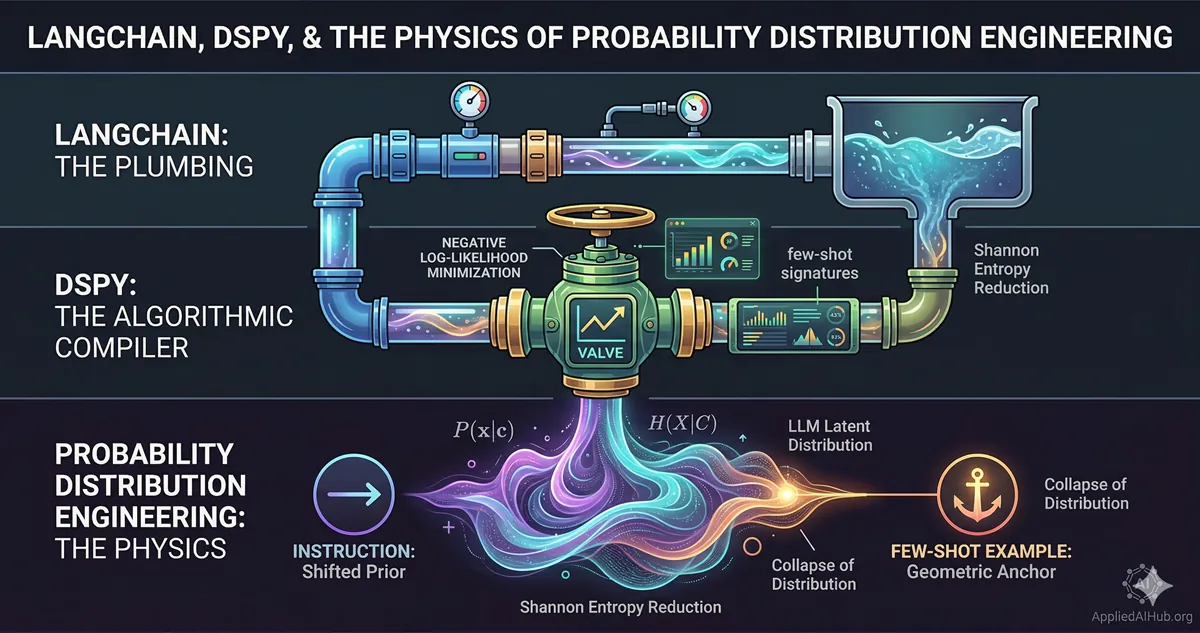

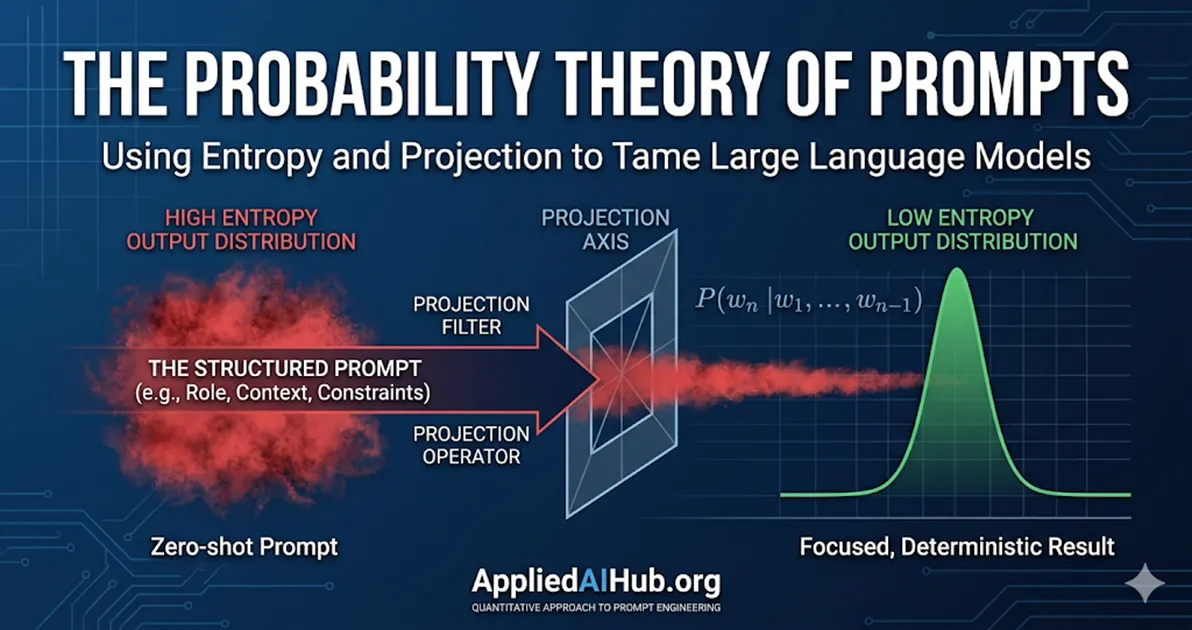

LangChain connects your pipes. DSPy optimizes your valves. But neither tells you why the fluid behaves the way it does. A first-principles look at what you are actually doing when you prompt an LLM.

Stop treating LLMs like conversational partners. A prompt is a mathematical projection operator that collapses a high-dimensional probability space into a deterministic, predictable path.

Everyone says keep prompts short. That advice ruins the output. Here's the rule that actually determines whether you get a usable result or a generic non-answer — and it has nothing to do with length.

Designing prompts for AI agents requires a fundamentally different mental model than prompting for answers. This guide covers the system prompt architecture, action instructions, failure handling, and output schemas that make agentic AI reliable.

A practical scoring rubric for prompt engineers who want to move beyond gut feel and build a systematic way to assess, compare, and improve their prompts before running them.

The most common errors beginners and experts repeat when prompting AI — and the specific fixes that eliminate them. A practical breakdown with before/after examples.

Why the 'just talk to it normally' crowd is missing the point. A clear-eyed look at what prompt engineering actually is, why it still matters, and what will and won't be automated away.

How to get more accurate answers from AI systems connected to your data. Most RAG failures are prompt failures — here's how to fix them.

Discover why companies are paying up to $300K/year for advanced prompt engineering skills, the real ROI of AI automation, and what they're truly building.

AI hallucinations aren't random — they're triggered by how you ask. A handful of prompt structures reliably shift the model from confident fabrication to honest uncertainty.

Ran the same structured prompt on GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro. The results are more nuanced than any benchmark will tell you.

Single prompts hit a ceiling. Prompt chaining breaks complex tasks into sequential steps where each output feeds the next — turning a model into a reliable, multi-stage process.

A plain-English guide to the LLM parameters that actually matter — what temperature and top-p do mechanically, how they interact, and how to set them deliberately instead of leaving them at defaults.

Explore the hidden layer behind every great AI assistant. Learn what a system prompt is and why understanding it changes how you interact with LLMs.

Most image generation problems are prompt problems. A practical breakdown of how Midjourney and DALL-E interpret your text — and how to write prompts that produce exactly what you visualized.

10 prompt templates that replace hours of brainstorming — practical, copy-paste-ready prompts for briefs, outlines, drafts, rewrites, SEO copy, social content, email sequences, and more.

The dark side of prompt engineering every developer must understand — what prompt injection is, how attackers exploit it, and what you can actually do to defend against it.

Why context is the secret ingredient to better AI outputs — and the specific ways to provide it so the model stops guessing and starts working on your actual problem.

Why telling ChatGPT 'you are an expert' actually works — and how to do it with enough precision to matter. A practical guide to role prompting and the mechanics behind it.

Role, Task, Goal, Output — a four-part structure for writing AI prompts that get specific, usable results. A practical guide to the RTGO framework and why each component earns its place.

Chain-of-thought prompting forces AI to reason step-by-step rather than jump to conclusions. Here's how it works mechanically, when to use it, and what most tutorials get wrong about it.

When should you give an AI model examples, and when should you trust it to figure things out? A practical breakdown of zero-shot and few-shot prompting — what each does mechanically, and how to choose between them.

What separates a vague request from a result that actually works. A practical breakdown of every structural element that determines LLM output quality — and how to control each one deliberately.

Discover why traditional search queries fail with large language models, and learn how providing context actually works to control probability distributions.

What you actually need to know in 2026, explained without jargon. A practical, no-nonsense guide to understanding AI, choosing the right tools, and getting real value from them — starting today.

The technique that makes AI actually remember your documents. A practical, non-technical explanation of RAG architecture, when to use it, its real-world applications, and honest limitations.

Discover 10 powerful AI tools I use every day for content creation and development. All completely free, with no affiliate links. Just tools that actually work.

Generative Engine Optimization (GEO) is the new SEO. Learn what this shift means for your content strategy, why old rules fail, and exactly how to adapt today.

An analysis of the privacy risks hidden in digital photo metadata, why standard precautions fail, and how client-side architecture offers a trustless solution for image sanitization.

A comprehensive risk analysis of browser-based image optimization. Learn how local-first tools protect client data, eliminate upload latency, and satisfy strict NDA requirements for designers and developers.

An analysis of the structural friction in modern cloud-based writing tools, and how local-first architectures like Markdown Ink return control, privacy, and speed to the individual user.

Discover Markdown Ink, a distraction-free, privacy-first editor that runs entirely in your browser. Write, preview, and export to PDF without any data leaving your device.

Learn how invisible EXIF metadata puts your digital privacy at risk, and why our new local-first, browser-based tool is the safest way to clean your images.

Analyzing the shift from fixed cloud costs to variable token billing, and why systematic cost forecasting is essential for sustainable AI production at scale.

Explore our deep dive into managing API costs for OpenAI, Anthropic, and Google models. Learn how our free LLM Cost Calculator simplifies AI budget planning.

Stop uploading client assets to random servers. Learn why local-first image compression is safer, faster, and the new standard for professional web development.

A deep dive into Dan Koe's AI-powered content creation workflow - from research to newsletter to multi-platform distribution - and how you can build your own scalable content system with large language models.

A practical guide on how AI systems automate human workflows, why understanding Skills is crucial, and how to leverage AI as an automation assistant rather than just a chat tool.

Read a clear analysis of what AI systems actually do versus what they appear to do, and understand why this critical distinction matters for all builders.